JavaScript is a very popular technology. Most websites have at least one element that is generated by JavaScript.

I remember the first JavaScript SEO experiments by Bartosz Goralewicz back in 2017 when he showed Google had MASSIVE issues with indexing and ranking JavaScript websites.

Google has come a long way since then, but now, in 2024, it still has issues with JavaScript websites.

JavaScript can cause problems with both indexing and ranking.

Let’s start with indexing. Rendering issues can push your pages into these Google Search Console statuses:

- Crawled - currently not indexed (most common indexing problem)

- Duplicate, Google chose different canonical than user

- Duplicate without user-selected canonical

Surprised? Let me introduce the vicious cycle of JavaScript SEO:

Step 1: Problems with rendering: If Google can’t properly render your content, then Google will judge your page based on just a part of your content.

Step 2: Google then wrongly(!) classifies your content as low quality and reports it as “Crawled - currently not indexed” or “Soft 404”.

Another risk: if your JavaScript dependency is high and rendering fails, all Google can see is boilerplate content, so it may classify your page as a duplicate and not index it.

It’s a ranking problem too

Is it only an indexing problem? Of course not! When Google has problems rendering your page, it judges your content based on just a small part of it. As a consequence, the page will rank suboptimally.

So it’s both a ranking and indexing problem.

In this guide, I will show you how to identify problems with JavaScript rendering so you can avoid both ranking and indexing issues.

Step 1: Find pages with the highest JS dependency using ZipTie

The first step is to find pages with the highest JavaScript dependency. You can do it using ZipTie.dev.

Assuming you audited your website using ZipTie with JavaScript mode on, you need to click on “Choose columns”:

Then tick the “JS dependency” column:

Then the final step is to find URLs with the highest JS dependency. To do this, sort by “JS dependency” descending.

Then choose a sample of URLs you want to analyze. If you have an e-commerce store, select a sample of each page type:

- Product pages

- Product listing pages

- Blog posts

For each of these groups, try to select the URLs with the highest JS dependency, because this will increase your chances of spotting JavaScript SEO issues.
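If you prefer to pick the sample from an exported report rather than by hand, the selection can be sketched in a few lines of Python. This is only an illustration: the CSV layout and the column names (`url`, `page_type`, `js_dependency`) are assumptions, not ZipTie's actual export format, so adjust them to whatever your export contains.

```python
import csv
import io
from collections import defaultdict


def pick_sample(rows, per_group=3):
    """Return, for each page type, the URLs with the highest JS dependency."""
    groups = defaultdict(list)
    for row in rows:
        groups[row["page_type"]].append(row)

    sample = {}
    for page_type, items in groups.items():
        # Sort descending by JS dependency, like sorting the column in the UI.
        items.sort(key=lambda r: float(r["js_dependency"]), reverse=True)
        sample[page_type] = [r["url"] for r in items[:per_group]]
    return sample


# Hypothetical export: URLs, column names and values are made up.
EXPORT = """url,page_type,js_dependency
https://example.com/p/1,product,82
https://example.com/p/2,product,15
https://example.com/p/3,product,64
https://example.com/c/shoes,listing,91
https://example.com/c/hats,listing,40
https://example.com/blog/a,blog,5
"""

rows = list(csv.DictReader(io.StringIO(EXPORT)))
sample = pick_sample(rows, per_group=2)
for page_type, urls in sample.items():
    print(page_type, urls)
```

With a real export, you would read the file with `csv.DictReader(open(path, newline=""))` instead of the inline string.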

Step 2: Identify elements generated by JavaScript (using WWJD)

Now we know which URLs have the highest JS dependency, and we have selected a sample of URLs to check.

Next, we can check which elements are generated by JavaScript. For this, we can use WWJD (What Would JavaScript Do).

For the purpose of this article, let’s check the homepage of Angular.io.

WWJD will show you two versions of the page. On the left is the version with JavaScript disabled, and on the right is the version with JavaScript enabled:

You can then compare them visually.

As you can see, JavaScript is fully responsible for generating the content. With JavaScript switched off, the only content that appears is the phrases “The modern web developer’s platform” and “this website requires JavaScript”.

So in this step, we identified the content generated by JavaScript. Now we can note down some of the phrases that only appear with JavaScript enabled:

- “Deliver web apps with confidence”

- “The web development framework for building the future”

- “Loved by millions”

- “Build for everyone”
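The same comparison can be roughly approximated in code if you have both versions of a page saved: the raw HTML (what you get with JavaScript disabled) and the rendered HTML (for example, copied from the browser's DevTools). The sketch below is not how WWJD works internally. It is a minimal illustration using Python's standard `html.parser`, and the two HTML snippets are made-up stand-ins, not Angular.io's real markup.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect visible text chunks, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())  # collapse whitespace
        if text and not self._skip_depth:
            self.chunks.append(text)


def visible_text(html):
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return parser.chunks


def js_only_text(raw_html, rendered_html):
    """Text chunks present after rendering but absent from the raw HTML."""
    raw = set(visible_text(raw_html))
    return [chunk for chunk in visible_text(rendered_html) if chunk not in raw]


# Made-up stand-ins for the "JavaScript disabled" and "enabled" versions.
RAW_HTML = (
    "<html><body><h1>The modern web developer's platform</h1>"
    "<noscript>This website requires JavaScript.</noscript>"
    "<script>boot()</script></body></html>"
)
RENDERED_HTML = (
    "<html><body><h1>The modern web developer's platform</h1>"
    "<h2>Loved by millions</h2><p>Build for everyone</p></body></html>"
)

generated = js_only_text(RAW_HTML, RENDERED_HTML)
print(generated)
```

The resulting list is a starting point for your notes. A visual check is still worthwhile, because text split across several tags will show up as separate chunks.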

Step 3: Check if Google can render your JavaScript content (using Google Search Console)

We already know the impact of JavaScript on our website.

The next step is to use the URL Inspection tool in Google Search Console to see how Google renders your content.

In Google Search Console, click on “URL inspection”:

And then paste the URL you want to check. It will take a few moments to get a response from Google Search Console.

Finally, Google will tell you whether the page is indexed. Depending on the answer, you will have to follow a slightly different process. But don’t worry, it’s quite easy.

If the page is indexed:

If the page is indexed (you can see a tick: “URL is on Google”), click on: “View crawled page”.

If the page is not indexed:

If the page is not indexed, you first need to click on “Test live URL” instead of going straight to “View crawled page”.

And then, finally, you will be able to click on “View tested page”, the live-test counterpart of “View crawled page”:
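For a larger sample, the indexed / not indexed branching above can be sketched as a small triage helper. It assumes you already have one index-status result per URL, shaped like the Search Console URL Inspection API response (`inspectionResult.indexStatusResult.verdict`, where `PASS` is assumed to correspond to “URL is on Google”). The sample data is invented, and the function names are hypothetical.

```python
def next_step(response):
    """Map an index-status verdict to the path described above."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    if status.get("verdict") == "PASS":  # assumed to mean "URL is on Google"
        return "view crawled page"
    return "test live URL first"


def triage(responses):
    """Group URLs by the path they should follow in the URL Inspection tool."""
    plan = {"view crawled page": [], "test live URL first": []}
    for url, response in responses.items():
        plan[next_step(response)].append(url)
    return plan


# Invented sample results; a missing or unknown verdict falls back to the live test.
SAMPLE = {
    "https://example.com/": {
        "inspectionResult": {"indexStatusResult": {"verdict": "PASS"}}
    },
    "https://example.com/new": {
        "inspectionResult": {"indexStatusResult": {"verdict": "NEUTRAL"}}
    },
    "https://example.com/broken": {},
}

plan = triage(SAMPLE)
for step, urls in plan.items():
    print(step, urls)
```

Treating anything other than `PASS` as “not indexed” is a deliberately cautious choice: the live test is the safer default when the status is unclear.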

Checking the code rendered by Google

Now it’s time for the most important part.

Once we click on “View crawled page”, we can open the HTML tab to see the code as Google rendered it.

Press Ctrl+F (or Command+F on Mac) and search for each crucial sentence from your page to make sure it appears here. These are the sentences we identified earlier using WWJD (What Would JavaScript Do).

Make sure important elements are accessible to Google.
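If you have many sentences to check, searching one by one gets tedious. A minimal sketch of the same check in Python, assuming you copied the code from the HTML tab into a string or file: the HTML snippet below is invented for illustration, and the tag stripping is a rough regex, not a full HTML parser.

```python
import html
import re


def normalize(markup):
    """Strip tags, decode entities, collapse whitespace, ignore case."""
    text = re.sub(r"<[^>]+>", " ", markup)
    text = html.unescape(text)
    return " ".join(text.split()).casefold()


def missing_phrases(rendered_html, phrases):
    """Return the phrases that do not appear in the rendered HTML."""
    haystack = normalize(rendered_html)
    return [p for p in phrases if normalize(p) not in haystack]


# Invented stand-in for the code copied from the HTML tab.
GOOGLE_HTML = (
    "<main><h2>Deliver web apps   with confidence</h2>"
    "<p>Loved by <strong>millions</strong></p>"
    "<p>Tools &amp; docs</p></main>"
)
PHRASES = [
    "Deliver web apps with confidence",
    "Loved by millions",
    "Build for everyone",
]

missing = missing_phrases(GOOGLE_HTML, PHRASES)
print(missing)
```

Any phrase in the returned list is content that users can see but that is absent from the code Google rendered, so it deserves a closer look.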

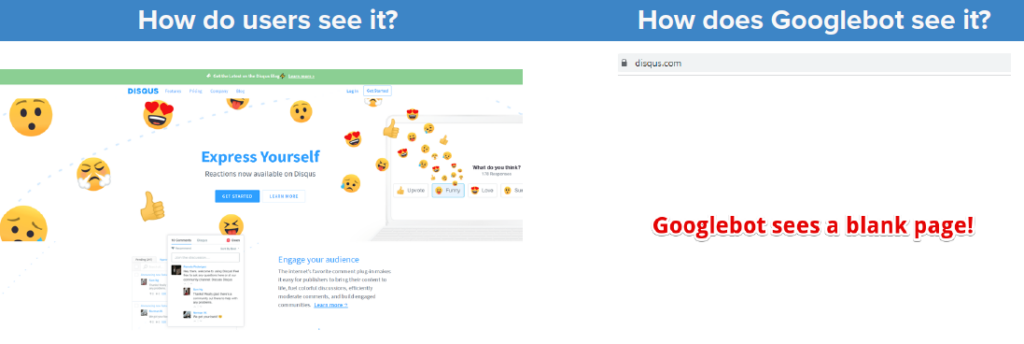

To illustrate the problem I like to use the Disqus.com case.

Users get a fully featured website while Google is… getting an empty page. This is something you will notice by using the URL Inspection tool in Google Search Console.

Edit: Disqus.com fixed this issue. However, the issue was present on Disqus.com for over 4 years.

When you should check how Google renders your page

Now I’ve taught you how to check if Google can properly render your website.

But the question is: when should you check it? There are a few common scenarios:

- You want to thoroughly audit your website

- You had ranking / indexing drops

- Some of your pages rank suboptimally

Geeky note: Live test vs checking indexed version

Some of the more advanced readers may wonder whether they should use the live test or check the indexed version.

- Live test is used to check if Google is technically able to render JavaScript.

- The indexed version shows whether Google ACTUALLY indexed your JavaScript content.

So I am more of a fan of checking the indexed version.

The live test can be helpful if you need a screenshot (this feature is not available for the indexed version).

Wrapping up

Even now, in 2026, it’s very important to check if Google can properly render your content.

This can help you diagnose crawling, ranking and indexing issues.