With Google AI Overviews, ChatGPT, and Perplexity dominating how people find information, traditional Google rankings only tell part of the story. Today, success means being mentioned, referenced, and cited by AI chatbots.

ZipTie, a specialized AI search monitoring tool, has developed metrics to track exactly that – your brand’s visibility across multiple AI-generated responses.

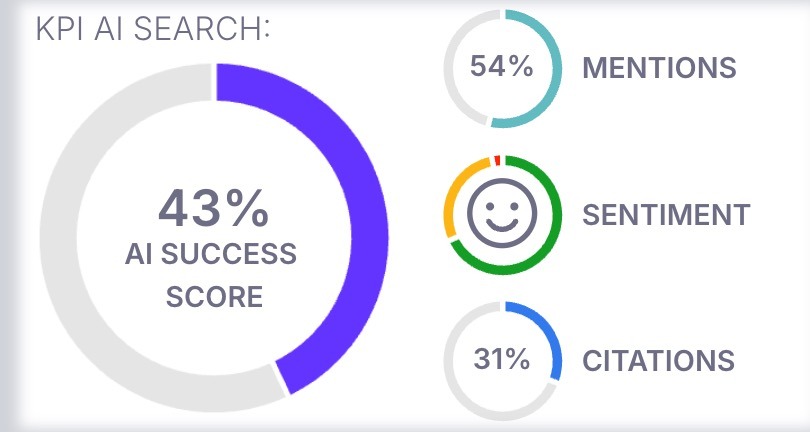

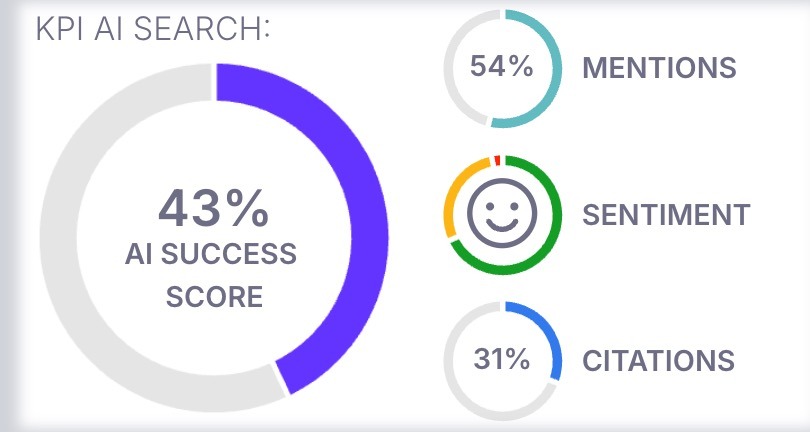

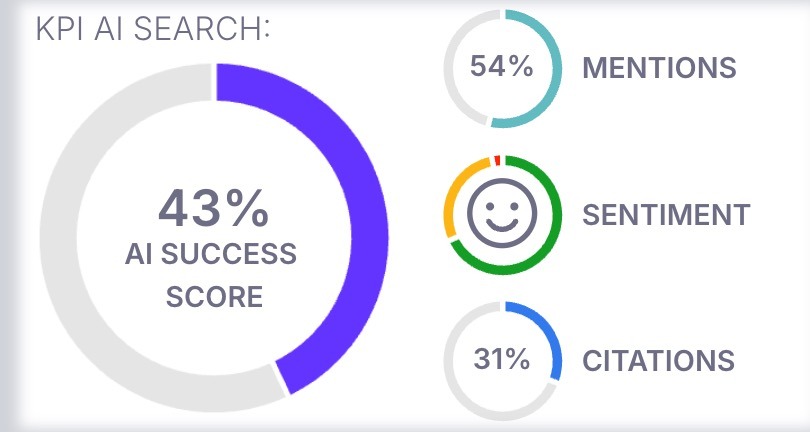

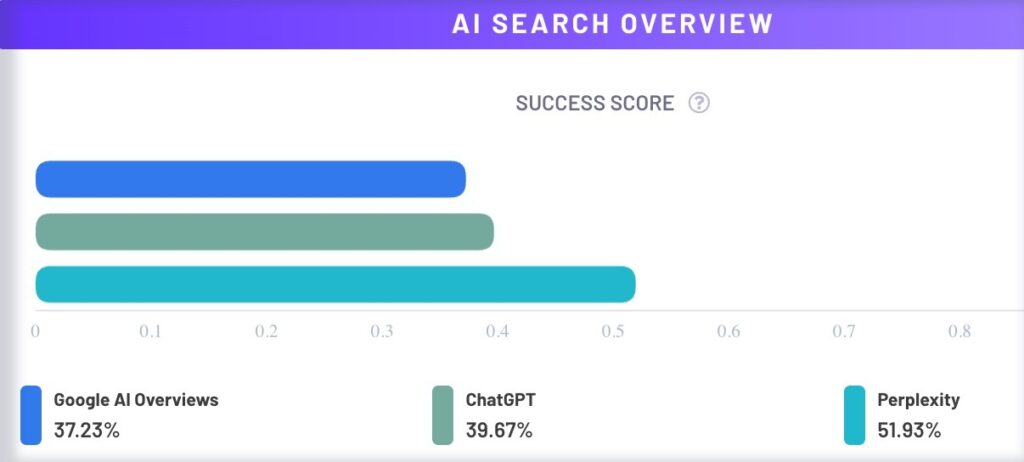

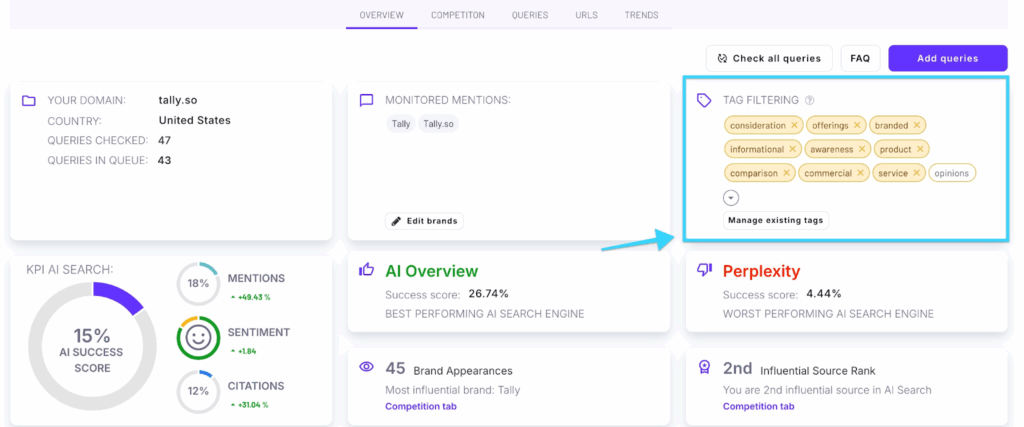

The AI Success Score is ZipTie’s solution for measuring your performance across major AI platforms: AI Overviews, ChatGPT, and Perplexity.

It’s a single, clear number that shows how well your business appears in AI search results – and more importantly, where to focus your efforts for maximum impact.

Instead of piecing together different signals from different AI tools, ZipTie’s AI Success Score provides unified performance tracking across Google AI Overviews, ChatGPT, and Perplexity.

This consolidated view makes it easy to see how your business performs in AI answers.

ZipTie’s AI Success Score evaluates three key elements of AI search performance:

How often do AI tools mention your brand? This metric tracks your direct presence in AI responses, including references to your company, products, or services.

It’s not enough to just be mentioned – context matters. The score tracks whether mentions are positive, negative, or neutral. Positive mentions significantly increase your chances of winning customers.

Citations measure how often AI search engines cite your website as a source. This shows whether your content is seen as authoritative enough to influence AI-generated answers.

Together, these three metrics paint a complete picture of your brand’s AI search success.
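To make the idea of rolling three signals into one number concrete, here is a minimal sketch. It is not ZipTie’s actual formula, which is not described here: the `QueryResult` structure, the `illustrative_score` function, and the 40/20/40 weights are all assumptions chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class QueryResult:
    """One AI answer checked for a single query (hypothetical structure)."""
    brand_mentioned: bool  # did the answer mention the brand?
    sentiment: float       # -1.0 (negative) .. 1.0 (positive)
    site_cited: bool       # did the answer cite the brand's website?


def illustrative_score(results, w_mention=0.4, w_sentiment=0.2, w_citation=0.4):
    """Combine mentions, sentiment, and citations into a 0-100 number.

    The weights are assumed values, not ZipTie's real ones.
    """
    if not results:
        return 0.0
    n = len(results)
    mention_rate = sum(r.brand_mentioned for r in results) / n
    citation_rate = sum(r.site_cited for r in results) / n
    # Sentiment only counts for answers that actually mention the brand.
    mentioned = [r for r in results if r.brand_mentioned]
    if mentioned:
        avg_sentiment = sum(r.sentiment for r in mentioned) / len(mentioned)
        sentiment_01 = (avg_sentiment + 1) / 2  # rescale -1..1 to 0..1
    else:
        sentiment_01 = 0.0
    score = (w_mention * mention_rate
             + w_sentiment * sentiment_01
             + w_citation * citation_rate)
    return round(100 * score, 1)


# Made-up example: one positive mention with a citation, one answer with neither.
example = [QueryResult(True, 1.0, True), QueryResult(False, 0.0, False)]
print(illustrative_score(example))
```

A weighted sum like this keeps each signal’s contribution easy to inspect, which is useful when you want to see whether a low score comes from missing mentions, poor sentiment, or a lack of citations.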

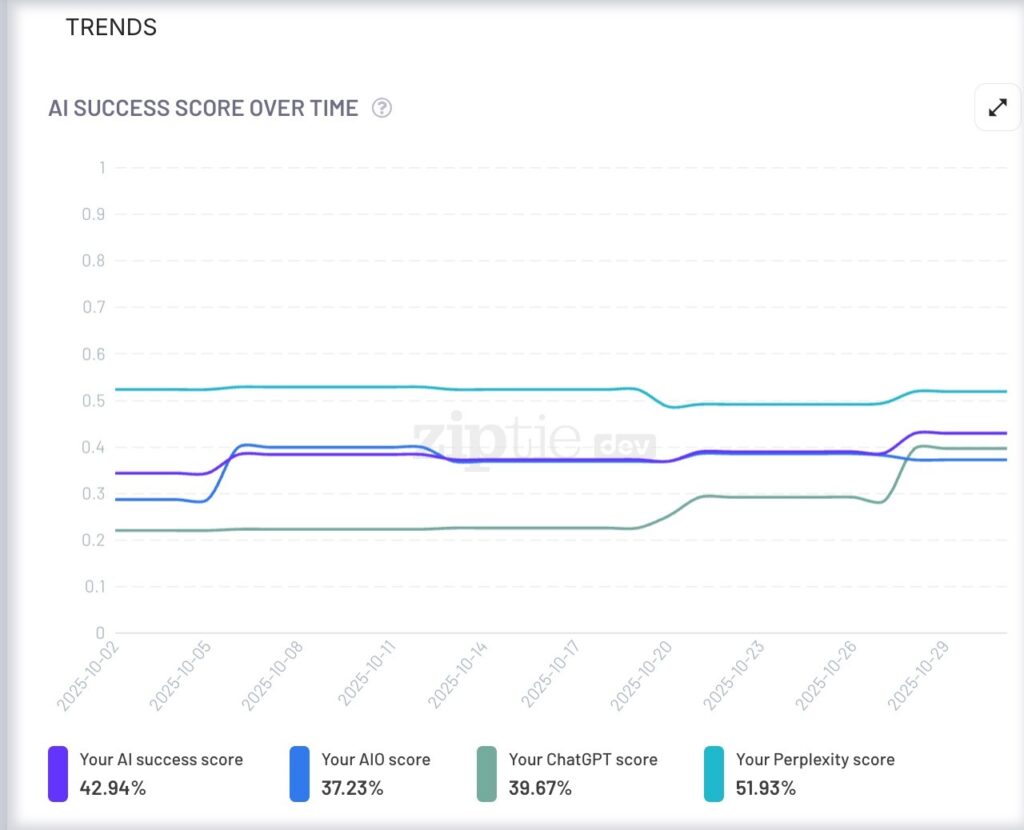

ZipTie lets you monitor your performance over time through the Trends tab, where you can track mentions, sentiment, and citations as they evolve.

ZipTie offers flexible data views at different levels:

Project Level: See your overall AI success in a single score – your big-picture performance.

Platform Level: Compare how you perform across Google AI Overviews, ChatGPT, and Perplexity. You might discover you’re strong in Google but weak in ChatGPT—or vice versa.

Category Level: Using ZipTie’s automatic query categorization, identify which topic categories you dominate and which need work.

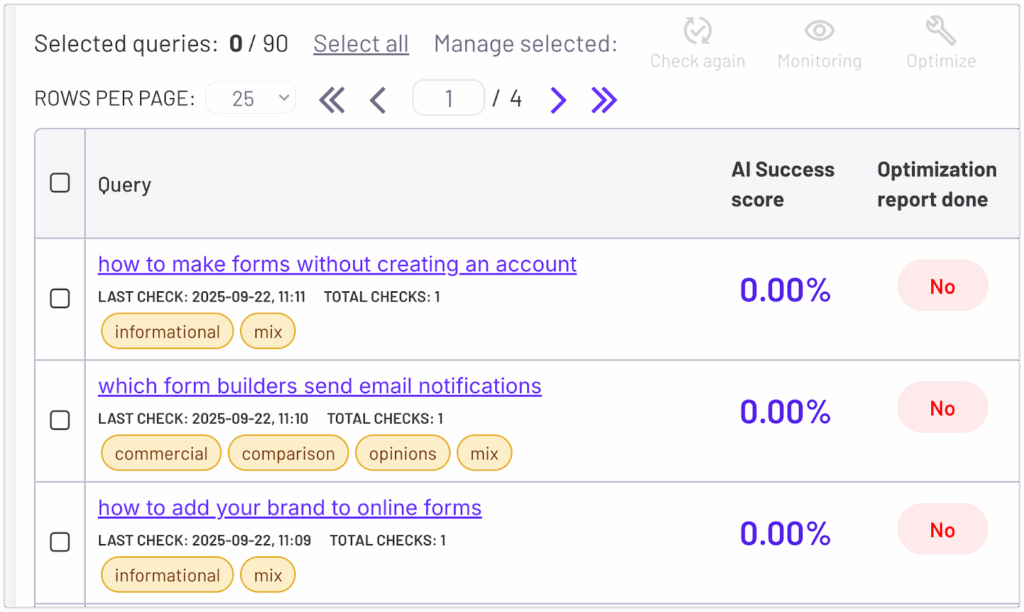

Query Level: Drill down to individual queries to see exactly where you’re succeeding and where you need to improve.

Once you have your AI Success Score, look for patterns:

Ask diagnostic questions. For example:

These are just starting points. The real value comes from analyzing your data, identifying patterns, and asking: What can I do to improve this score?

ZipTie provides several tools to help you improve:

The journey starts with understanding your AI Success Score, finding patterns in your data, and taking targeted action to improve your visibility where it matters most.

At the top of your ziptie.dev/ dashboard you will find an Insights tab, where you’ll see a quick overview of your AI performance, including:

Scroll down to find detailed insights about your AI search performance. Here’s what makes this feature special:

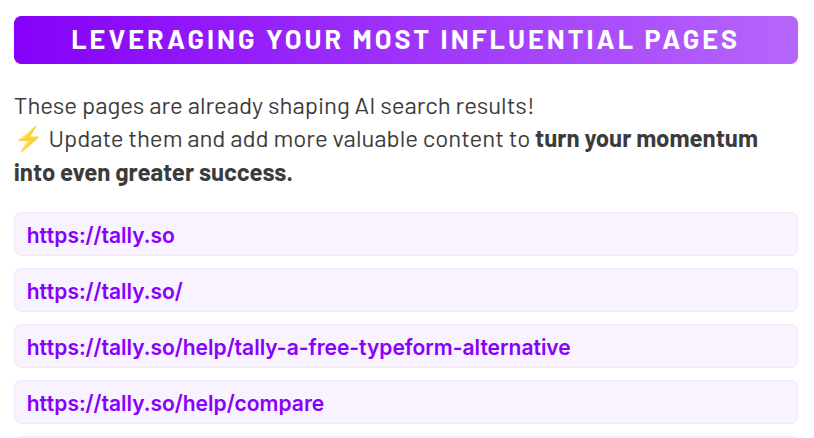

We identify the pages on your website that have the biggest impact on AI search results. These are your golden opportunities — since AI engines already trust these pages, updating them with fresh, valuable content can significantly boost your visibility.

Real Success Story: I discovered something fascinating while optimizing ZipTie’s presence in the AI Search monitoring space. One of our most influential sources was a two-year-old article that was completely outdated! After updating it with current information, AI Overviews, ChatGPT, and Perplexity started featuring the refreshed content in their responses within just a few hours.

The takeaway? Even old content can become incredibly powerful with a simple update.

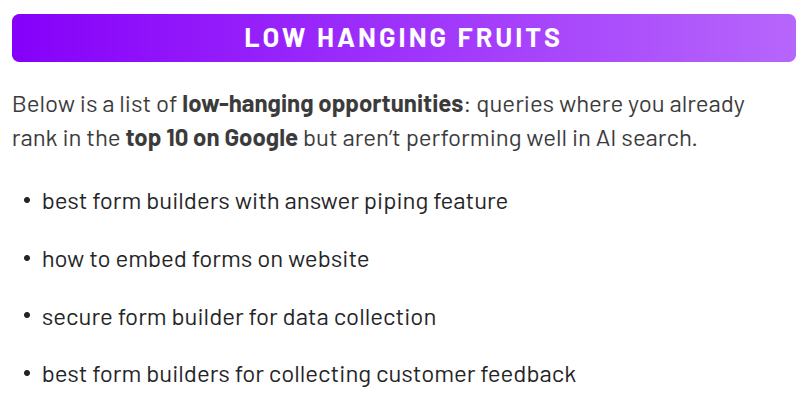

We show you queries where you already rank in Google’s top 10 but aren’t performing well in AI search. These represent quick wins — small optimizations that can lead to big improvements.

Reddit conversations significantly influence what AI search engines display. We identify the most impactful Reddit threads related to your industry, so you can engage strategically and strengthen your brand’s AI search presence.

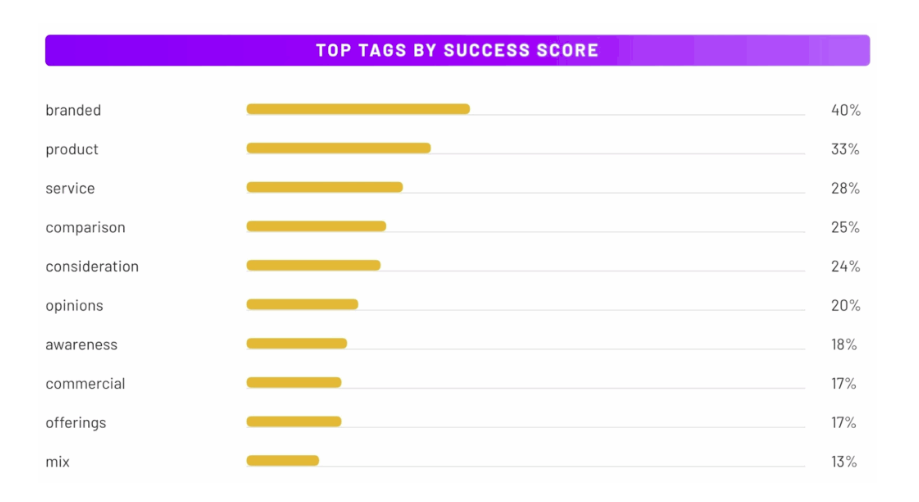

In the Overview tab, you’ll find our “Top Tags by AI Success Score” feature. This shows you which categories of search queries are performing best for your brand, making it easier to identify trends and opportunities in your data.

We’ve been busy making ZipTie more useful for your business. The new Insights tab was just the beginning – here’s what else we’ve built to help you succeed in AI search.

Know What Content Actually Works in AI search

We dove deep into some of the most successful projects to find content performance patterns. Instead of keeping these insights to ourselves, we turned them into a practical guide you can use right away.

Get Specific Steps to Improve Your Content

Our new content optimization module takes the guesswork out of improvement. It shows you exactly what changes to make in your content to rank better in AI search results. No more wondering what’s working – you’ll have a clear roadmap. In our article you will see how to optimize your content for AI step by step.

Ready to turn your data into actionable insights? Dive into the new AI Insights feature and start optimizing your AI search success today.

We’ve offered a query categorization system for some time, but it required manual setup. While this worked well for technical users, we wanted something more accessible for everyone.

That’s why we developed an automated query categorization system. Now you can quickly see how well your content performs in AI search, with automatic tags that organize your data by topic, intent, and stage of user journey – no manual work required.

You can use the tag filters to check how well you’re performing in each category.

ZipTie’s Overview tab gives you a high-level view of your AI search performance across all tags.

In this example, the business we analyzed doesn’t perform well in AI search, struggling especially with bottom-funnel questions – showing low scores for awareness stage queries and commercial/offering content.

By looking at the tag performance you can quickly identify your strengths and weaknesses before drilling down into specific areas that need attention.

From there, switch to the Queries tab to see the complete breakdown. Here you’ll find every individual query with its automatically assigned tags, giving you the detailed view you need to understand exactly what users are searching for and how each query is performing.

For data enthusiasts, we provide comprehensive CSV exports with complete information about all your AI search checks.

These detailed reports (which include tag data and AI Success Scores for every AI search engine) make it easy to analyze ZipTie data in Excel or other data analytics tools, or to integrate it into your custom workflows.
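As a rough sketch of what such a custom workflow might look like, the snippet below averages scores per tag using only Python’s standard library. The column names (`query`, `tags`, `engine`, `ai_success_score`), the `;` tag separator, and the sample rows are assumptions made for illustration; check the header row of your own export and adjust accordingly.

```python
import csv
import io
from collections import defaultdict


def average_score_by_tag(csv_file, tag_column="tags",
                         score_column="ai_success_score", tag_separator=";"):
    """Return {tag: mean score} for a CSV with one row per query check.

    Column names and the tag separator are assumed, not taken from a real export.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for row in csv.DictReader(csv_file):
        score = float(row[score_column])
        for tag in row[tag_column].split(tag_separator):
            tag = tag.strip()
            if tag:
                totals[tag] += score
                counts[tag] += 1
    return {tag: totals[tag] / counts[tag] for tag in totals}


# Made-up sample rows standing in for an exported file.
sample = io.StringIO(
    "query,tags,engine,ai_success_score\n"
    "best form builder,commercial;consideration,ChatGPT,40\n"
    "what is a form builder,informational;awareness,Perplexity,80\n"
    "form builder pricing,commercial;decision,AI Overviews,20\n"
)
averages = average_score_by_tag(sample)

# Print the weakest categories first so they are easy to spot.
for tag, avg in sorted(averages.items(), key=lambda item: item[1]):
    print(f"{tag}: {avg:.1f}")
```

To run it against a real export, replace the `io.StringIO` sample with `open("your_export.csv", newline="", encoding="utf-8")`.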

Here’s my shortlist:

These are articles or landing pages that clearly explain what your products or services do and how they help customers solve their problems.

This type of content teaches LLMs and AI search engines about your offerings and why they matter.

You should focus on the features or benefits most important to your customers. This helps in two ways:

Example:

Let’s say you sell Toyota vehicles, and safety is an important factor for AI systems when recommending cars.

By clearly showcasing Toyota’s advanced safety features (like Toyota Safety Sense™ or top crash-test ratings), you increase the chances that AI will choose your brand when someone asks: “What are the safest family cars in 2025?” or “What are the best family cars in 2025?”

Develop articles that show how your product or service compares to alternatives, highlighting your unique advantages.

Remember Apple’s iconic ads comparing Macs to Windows PCs?

A clear comparison written on your website can be just as effective in AI search because it helps LLMs understand your competitive advantage.

Example:

If you’re Toyota and want to highlight your strengths compared to Honda, you could create a piece like: “10 Reasons Toyota Outperforms Honda in Reliability and Safety”

Of course, this type of content might not fit naturally on a corporate site like Toyota’s main domain. In such cases you could:

This way you increase the chances of directly influencing what AI search engines learn about your brand and, ultimately, what they recommend to users when someone asks: “Which SUV is more reliable, Toyota or Honda?” or simply “Which is better: Toyota or Honda?”

Create “Top 10” or “Best of” pages that list leading tools, services, or products in your industry.

This strategy works well because people (and AI search engines) love organized, easy-to-digest recommendations.

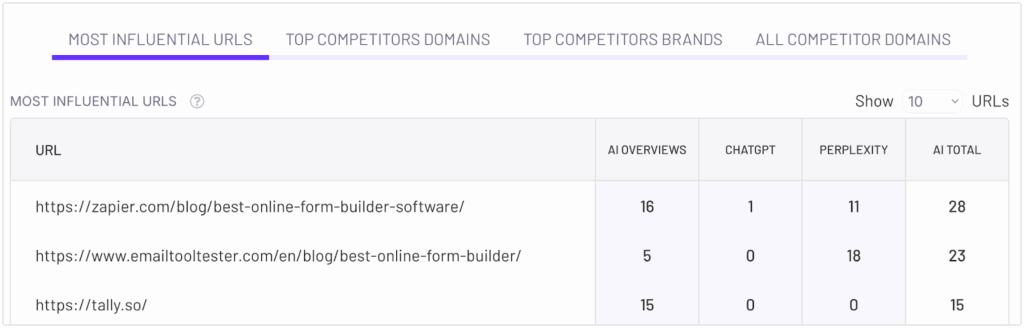

Many successful brands already do this. For example, Zapier.com commonly appears among the most influential sources for AI search engines across many niches. They frequently publish “best X” lists; two examples are below:

You can take a similar approach for your industry.

For instance, a Toyota blog could publish:

“Best SUVs for Families in 2025” or

“Top Hybrid Cars for City Driving”

This type of content is extremely effective at building authority and visibility in AI search results.

Build comprehensive answers to the most common questions your customers ask.

FAQ pages aren’t just for user convenience — they’re also a powerful way to train LLMs about your products and services.

The clearer and more direct your answers, the more likely AI will recommend your brand.

Pro Tip:

Some questions have a much bigger influence on whether AI recommends your brand — especially those about safety, trust, and purchase decisions.

If Toyota’s safety reputation is a key factor, create FAQs that directly address these concerns, such as:

By providing detailed answers backed by data, ratings, and real examples, you help both users and AI search engines understand why Toyota is a smart, safe choice.

This FAQ strategy works best when paired with your feature and benefit pages. Together, they make it more likely that AI will recommend your brand when someone asks: “What are the safest cars for families?” or “Which SUVs have the best reliability?”

Educational content builds trust and authority, helping both users and AI search engines see your brand as an expert.

To be most effective, create content for every stage of the buyer’s journey:

Introduce key concepts to people new to your product or industry.

Why it matters: Your brand can be present from the very beginning of a customer journey.

Answer deeper questions as users evaluate choices.

Why it matters: It helps you be successful for queries like “Best SUV for a family.”

Overcome last-minute objections and guide purchase decisions.

Help customers succeed and stay loyal.

Why it matters: Shows ongoing value and strengthens brand reputation.

This framework creates a full content ecosystem, teaching both humans and AI why your brand is the right choice at every step.

This guide is a general observation on what works in AI search, based on multiple projects I’ve seen, regardless of niche.

However, I recommend using ZipTie to find ideas for content by looking at the most influential content for your niche.

Once ZipTie finishes the AI visibility analysis, go to the most influential URLs to see which content is already successful. Below is an example: the two most influential sources are listings of the best online form builders, published on Zapier and EmailToolTester.

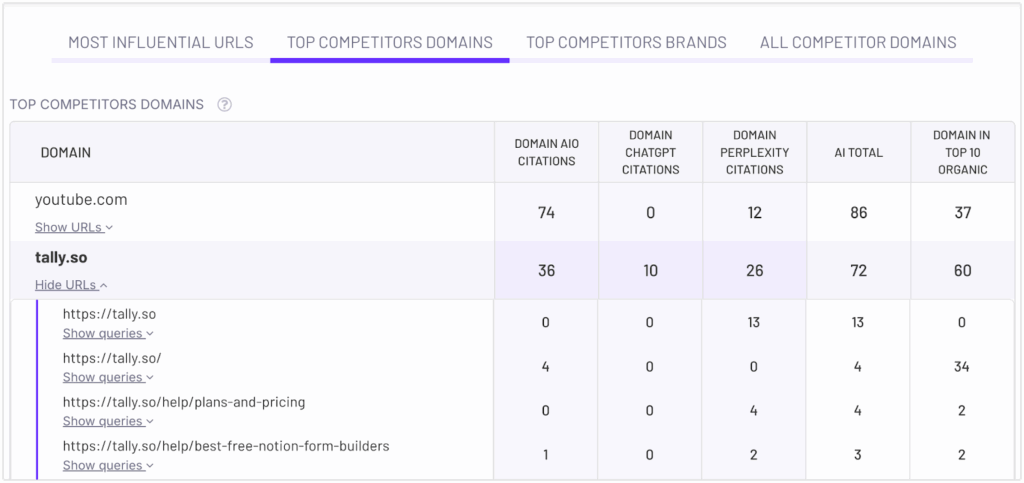

You can also visit the tab with top competitor domains to see which of your competitors’ content is already successful in AI search. If it works for them, there is a high chance that it can also work for you.

The key to success in AI search is creating content that genuinely helps your readers.

When your content provides real value, answers critical questions, and builds trust, it naturally attracts the right audience – and positions your brand for visibility in AI-driven results.

I’m one of the co-founders of ZipTie, an AI search tracking platform. Rather than write another generic sales pitch, I want to share the criteria I’d use to evaluate ANY AI search tracking tool – including our own competitors. These are the hard-learned lessons from building in this space.

Here’s my honest framework for evaluating these tools – use it to assess ZipTie, our competitors, or any new entrant in the space.

Traditional SEO was “simple”: rank high for keywords, get traffic. AI search is different – it combines website authority with brand power. Your tracking tool needs to measure both citations (when your website influences AI search results) and mentions (when your brand is “recommended” to the users by AI).

Most tools focus heavily on one or the other. The best ones treat them as equally important metrics that work together.

How ZipTie handles this: We track both mentions and citations as core metrics, recognizing that AI search success requires both strong brand presence and authoritative content. On top of that, we also track brand sentiment to let you understand how positively AI search talks about your business.

I’ve seen tools that track 15+ different AI platforms. Impressive? Maybe. Useful? Probably not.

The reality is that three platforms drive the majority of meaningful AI search traffic:

Yes, other platforms exist, but spreading your optimization efforts across obscure LLMs is usually a waste of time.

Look for tools that help you focus rather than chase every shiny new AI model.

ZipTie focuses on the most important AI search platforms: Google’s AI Overviews, ChatGPT and Perplexity.

Here’s something most people don’t realize: AI search queries are fundamentally different from traditional SEO keywords. They’re longer, more conversational, and incredibly hard to predict manually.

I spent months trying to manually generate query lists for our clients. It was painful and incomplete. The breakthrough came when we built automated business query generation at ziptie.dev/ – suddenly we were discovering relevant searches our customers never would have found on their own.

A good AI search monitoring tool should give you many options – you can check your own selection of queries, or you can use their query generator.

When it comes to analyzing your success in AI search engines, you need both executive-focused reporting and the granular data for actually optimizing content. Tools that only provide high-level summaries leave you guessing about what to fix. Tools that only show granular data overwhelm you with information.

Additionally, a good tool should not only support these higher levels of analysis, but also let users dig deeper and look at particular products, services, or website sections.

The sweet spot is having project-level overviews, topic-level groupings, and individual query performance – all in one platform. ZipTie allows you to do this – you can set separate projects and organize queries by tags.

I cringe when I see tools promoting “LLMs.txt file generation” as a major feature. There’s zero evidence this impacts AI search results, but it sounds technical enough to impress clients.

Similarly, many tools show “approximate AI search volume.” In my experience, this data often isn’t actionable and can sometimes lead you in the wrong direction when making optimization decisions.

I understand why these features get built – they look impressive in demos and help differentiate tools in a crowded market. But from a practical standpoint, they don’t typically address the core problems businesses face when optimizing for AI search.

The Accuracy Problem

Most founders building AI search tools haven’t grappled with how hard accurate tracking actually is. To properly track AI search, they need to avoid personalization, track in the proper geolocation, and avoid shadowbans. For example, it’s quite common that AI Overviews shows the AI answer to real users but not to bots, which makes delivering accurate results quite challenging.

When evaluating tools, ask specific questions about their data collection methodology. Vague answers usually indicate shortcuts that compromise accuracy.

The AI search space moves fast, and today’s perfect dashboard becomes tomorrow’s limiting interface. Any tool that doesn’t offer robust CSV export or API access is betting their current interface will meet all your future needs forever.

While ziptie.dev/ doesn’t yet offer API access, it does offer robust CSV export, and we understand the importance of eventually adding an API.

The key is ensuring you’re never completely locked into one way of analyzing your data.

Raw data is overwhelming. What you need is a clear score that tells you: “You’re succeeding here, failing there, and here’s what to prioritize.”

At ziptie.dev/ we built our AI Success Score around three factors: mention frequency, sentiment, and citation strength.

Traditional SEO competitors are obvious – they’re ranking above you in Google. AI search competitors are hidden. They might have terrible traditional SEO but dominate AI responses through strong brand presence or strategic content positioning.

The tool of your choice should reveal these emerging competitors before they become obvious threats.

Knowing that Reddit influences 40% of AI responses in your niche is actionable intelligence. Knowing that “social media” influences responses is useless generalization.

Look for tools that get specific about which exact domains and content types drive AI responses in your industry.
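To show what “getting specific” means in practice, here is a minimal sketch that turns a list of cited URLs into a per-domain share of citations. The `domain_share` function and the sample URLs are hypothetical; in a real analysis the URLs would come from whatever citation data your tool exports.

```python
from collections import Counter
from urllib.parse import urlparse


def domain_share(cited_urls):
    """Return {domain: fraction of all citations}, most-cited domains first."""
    domains = []
    for url in cited_urls:
        host = urlparse(url).netloc.lower()
        # Treat "www.example.com" and "example.com" as the same domain.
        if host.startswith("www."):
            host = host[4:]
        if host:
            domains.append(host)
    counts = Counter(domains)
    total = sum(counts.values())
    return {domain: count / total for domain, count in counts.most_common()}


# Made-up URLs standing in for sources cited in AI answers.
urls = [
    "https://www.reddit.com/r/example/comments/1",
    "https://reddit.com/r/example/comments/2",
    "https://zapier.com/blog/example-list",
    "https://example.com/guide",
    "https://example.com/comparison",
]
shares = domain_share(urls)
for domain, share in shares.items():
    print(f"{domain}: {share:.0%}")
```

A domain-level breakdown like this is what separates a statement such as “Reddit accounts for 40% of citations in this sample” from a vague “social media matters.”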

ziptie.dev/ can give you this information in a simple, easy to read and convenient table that is updated on the go.

This is perfect to help you prioritize outreach efforts. Additionally, you can easily see which pages of your competitors are most successful in AI search – this way you can “copy” their strategy to increase your success in AI search.

Should you choose a specialized AI search tool or an established SEO platform adding AI features?

Having competed against both, I believe specialization wins in fast-moving markets. Traditional SEO tools have existing architectures, customer expectations, and product roadmaps that make pivoting to AI search challenging. They’re building AI features as add-ons to their core business.

Specialized AI search tools are building their entire product around this new paradigm. They can move faster, make more radical interface decisions, and focus completely on solving AI search problems.

But specialization only matters if the specialist is established enough to survive and iterate. A six-month-old tool built by a solo developer might be specialized, but obviously, it’s also risky.

ZipTie was long a technical SEO tool, but two years ago we made a strategic decision to go 100% AI. This made us the first tool to offer tracking for AI Overviews, which is part of the biggest AI search engine.

When evaluating any AI search tool (including ours), ask:

I’ll be transparent – ZipTie aligns well with these criteria, but every business has different needs and priorities. Use this framework as a starting point to build your own evaluation checklist based on what matters most for your specific situation.

Google is experimenting with the Search Generative Experience (SGE). Currently, access is limited to beta-testers. However, if it becomes global, we can undoubtedly call it the biggest change in Google’s history.

Nevertheless, it comes with significant problems. This article is an open letter highlighting the key issues and calls for fair and professional practices from Google.

With the new changes, Google displays a box generated by artificial intelligence at the top of its search results, as seen on the screenshot below:

You can then ask SGE follow-up questions, just like talking to a friend. If you have used ChatGPT before, you might think of it as a mix of ChatGPT and traditional Google search. Technically, Google does not use ChatGPT but its own system, but you get the idea.

It’s a massive change for two reasons.

If you want to read more about SGE, you can refer to my article 10 things you should know about Google’s Search Generative Experience.

Now, let’s move on to the promised list of the 7 deadly sins of SGE.

Hold your horses, as there are several issues that need to be addressed.

SGE, with its current issues, poses a huge risk for fair information distribution. It can spread misinformation or conflate facts with personal opinions, which can have serious consequences on buyer decisions and even political choices. On top of that, Google is not fair with the original sources of information. Shame on you, Google!

It is crucial for Google to address these issues to maintain user trust and provide accurate information.

Let’s delve into the specific problems and expectations.

I have noticed many instances where Google presents summaries on various topics without properly attributing the sources.

This lack of attribution is unfair to content creators who deserve recognition for their work. For instance, Google does not show attribution in the case of an informational query like “What is JavaScript SEO?”

It is essential for Google to provide proper credit and references when using content from external sources. Failing to do so is considered unethical and should not be accepted.

Google’s SGE relies on content from the internet, and unfortunately, it often presents identical information. I consider this plagiarism.

Let’s consider an example: when searching for the “best way to clean white sneakers at home,” Google proposes a method using baking soda and vinegar. However, this method is almost identical to the one presented by Famous Footwear.

The extra layer of this problem comes from the fact that this fragment is almost fully copied from Famous Footwear, again with no attribution to the source.

To make it easier for you to spot the “differences” I compiled them in the table below:

| The text offered by Google | Original Source |
| --- | --- |
| Mix one tablespoon of hot water with one tablespoon of white vinegar and one tablespoon of baking soda | Mix one tablespoon of hot water with one tablespoon of white vinegar and one tablespoon of baking soda |
| Let the shoes air for several hours before brushing and shaking off the dried paste | Leave shoes to air for several hours before brushing and shaking off the dried paste |

The lack of originality in the information provided by Google raises concerns about plagiarism.

What we can see here is that SGE lacks rules to ensure it does not directly duplicate content found on the web.

Let me show you another example.

I asked Google to give me famous quotes by Jose Mourinho.

Google’s SGE took content from BrainyQuote without even changing the order of the quotes.

It is 1:1 plagiarism. Shame on you, Google!

To ensure ethical practices, Google should refrain from directly copying content and instead strive to provide unique and valuable insights.

When users search for offers or news, they expect the most up-to-date information. However, Google’s SGE sometimes displays outdated data. For instance, a query for “The Witcher 3 best price” yields an outdated lowest price.

Although the price might have been accurate in the past, it no longer reflects the current market situation.

We expect Google to give a warning like this: “The information was last updated on XXX.XX.XXXX. For the current information, refer to the sources.”

Geographical location plays a significant role in determining the relevance of information. Users expect search results to align with their location-specific needs. However, Google’s SGE sometimes displays information from different regions, which may not be applicable or useful. For example, searching for “free bets” in the USA yields results based on Nigerian information, including local currency.

To further emphasize the importance of geographical localization:

Different locations have different tax and legal systems, so you may receive advice that is totally irrelevant to your specific situation.

Local customs vary, such as the different New Year dates observed in Georgia, Israel, Russia, the Republic of Macedonia, Serbia, Montenegro, and Ukraine.

To improve the user experience, Google should consider localizing content and providing region-specific results.

The mechanism behind Google’s product recommendation system is not without flaws. Users expect the “best” recommendation to be based on reliable factors, but this is not always the case.

For instance, when searching for the “best antivirus software,” Google might suggest TrendMicro Antivirus, even though it receives lower ratings than other options (3.4 vs 4.7 average!!!).

As it is not clear how the system works (it is not disclosed in the search results), the system’s reliance on recommendations or potential affiliate commissions raises concerns about biased suggestions.

It is important for Google to refine its recommendation system and prioritize the quality and relevance of suggested products.

This one is a significant issue.

The problem arises when Google’s SGE incorporates opinions and surveys as though they were established facts. The internet is filled with diverse opinions, surveys, and investigations that contradict each other.

And then, when Google paraphrases these opinions and presents them as the top search results, it can lead to misleading information.

For example, a search for “Should I buy a Tesla” presents negative opinions about Tesla’s reliability, leading the user to reconsider their decision.

Hey, if I read that Tesla has the third-worst reliability score among all automakers, I would never consider buying a Tesla.

It is essential to remember that investigations and surveys are subjective and dependent on various factors.

Google should take precautions to distinguish opinions and limited investigations from established consensus in order to prevent misinformation.

Now, early adopters see SGE as a nice addition, kind of experimental. Tomorrow, people will treat this as an “executive summary” and may trust it more.

This is a very risky field, as it can:

So we demand that Google be more fair and professional.

One concerning trend is the facilitation of market dominance by major players through Google’s SGE. When users search for specific products like audiobooks or ebooks, Google’s SGE often directs them to purchase from dominant platforms such as Audible or Amazon. This raises questions about fair competition and the influence of affiliate programs.

For instance, when I searched on Google for “Audiobook 7 Habits of Highly Effective People,” it “recommended” that I listen to it on Amazon. I noticed that Google is taking information from Amazon’s affiliate websites.

Amazon’s success and the widespread use of its partner program contribute to this dominance. However, it is worrisome that Google presents information from affiliate websites as if it were an established fact, potentially biasing search results.

We demand that Google strive to promote fair competition and provide unbiased information to users.

Google’s Search Generative Experience (SGE) brings both excitement and concerns.

To maintain user trust and create a more reliable and unbiased search engine, Google needs to address these concerns. They should give proper credit, avoid copying content, provide up-to-date information, improve relevance based on location, refine product recommendations, separate opinions from facts, and promote fair competition.

I hope this article reaches the people responsible for Google’s SGE. The issues mentioned here need to be resolved.

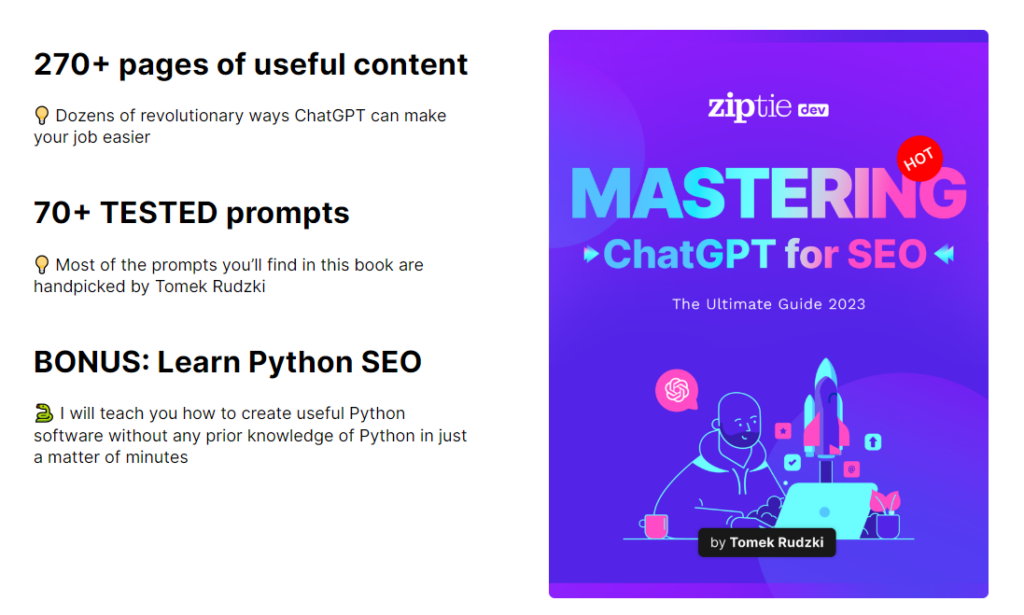

Recently, I released my FREE ebook titled “Mastering ChatGPT for SEO.” It contains over 270 pages of valuable content, with most prompts handpicked by me. You can get it here. 🎉 🎉

The emergence of Artificial Intelligence (A.I.) has the SEO world abuzz about its implications for technology and what it means for the human race as a whole. From improving efficiencies tenfold to assisting in the creation of new ideas and new directions, the implications of artificial intelligence are numerous and spellbinding.

As positive as A.I. can be for the world, there is a much darker side to the emergence of this new paradigm, as researchers and numerous scholars continue to warn us. So much so, that it could potentially cause the extinction of the human species. In spite of the dark side, however, there are so many bright aspects.

And no field could have been as unprepared for this advanced paradigm as search has been. With Google and Microsoft entering the fray, we have seen transformative changes in the world of search, especially when it comes to Google’s SGE (search generative experience). Powered by A.I., this new user interface creates an all-new way to experience search.

Read on to find out more about how Search Generative Experience will impact Google, and all of its implications for the SEO world.

This is a big change. In this new layout, a text snippet generated by artificial intelligence appears at the top of the organic search results, followed by traditional results. SGE becomes the new “position 0.”

Please look at the example below:

Now let’s examine some sample A.I. search results to see how Google might look in the near future.

With this result, we see the new search generative experience. It’s tailored to the highly localized search query, with locations of the best pizza restaurants on the left, followed by a carousel, and the Google map on the right.

For this query, we are seeing yet another highly localized search experience generated by A.I. Compared to the standard localized search result, which only has a list of places on the left, followed by the map on the right, we now see that the new SGE shows a few more pieces of information.

First, on the left, we have the latest listings of these places, but they are followed by not only the number of star reviews but also show a review snippet. These results are then followed by a carousel on the right, along with the Google map showing the location of these places.

Clearly, Google is aiming to provide not just a result that matches the user’s query, but the most helpful result for that query.

As a query, “iPhone 13” is primarily buyer-intent focused. But the new SGE adds a layer of informational search intent to the results, producing a SERP that serves both intents. The new SGE adds some pros and cons, as well as some “People also ask” queries below the results. In addition, the carousel shows several resources users can turn to for additional reviews and other information.

This query is highly buyer-intent focused, but SGE provides all the information a buyer might want when looking for the best new smartphone for $1,000 USD. It includes things to consider when purchasing a smartphone, some recommendations for the best smartphone, and a carousel that shows top articles from across the industry.

The query “Google Search Console” by default shows the usual top organic results, followed by “people also ask” questions. With SGE, however, it provides a more comprehensive answer to the question, which keeps people on Google search longer, thereby potentially increasing Google’s own dwell time metrics.

Throughout these experiments, I was able to put together a number of observations about Google’s new search generative experience, and how this will impact search results now and in the future. The ramifications that these observations present for the world of search are significant and are not to be understated.

SGE is displayed for different search intents, including informational, transactional, local, and commercial investigation. However, it’s not available for all queries.

Interestingly, SGE is not displayed for every search query. We don’t know if this is an experimental limitation or a deliberate design choice.

First of all, it’s not available for queries related to recent news. Here’s an example with Barcelona’s soccer results:

There are many queries that are not covered by Google’s A.I., for reasons unknown. For example, queries like “How to prepare chicken salad” or “Stocks to buy in 2023” don’t generate SGE results.

For certain queries, SGE is uncertain whether A.I.-generated results should be shown.

In these cases, users who want the A.I. results can click the “Generate” button. The examples below, using Samsung and Amazon as queries, illustrate this feature:

Example: Samsung

Example: Amazon

I also noticed that for many other types of queries, Google’s SGE doesn’t generate results by default. One such example is “what’s batch-optimized rendering in SEO”:

After more than 20 years of using Google’s search engine, we’re used to typing short queries containing keywords, such as: “Smartphones up to 1000 USD with a good selfie camera”.

The adoption of SGE opens up the possibility of more natural and human patterns of interaction with the search user interface.

| Before: “keyword” query | After: conversational query |
| --- | --- |
| Query: “Smartphones up to 1000 USD with a good selfie camera”. | Query: “I want to buy a good smartphone up to 1K USD, but I care only about the selfie camera, I don’t care about the main camera quality” |

Will the SGE be able to transform our 20-year-old search behavior?

Here is an example of a conversational search using SGE.

First, I told SGE that I would like to buy a smartphone for up to $1,000.00 USD, with a great rear camera:

Then I asked a follow-up question, narrowing the results to the $800.00-$1,000.00 USD price range:

You can ask SGE as many follow-up questions as you want.

However, it seems that SGE isn’t a fully reliable virtual assistant. I asked SGE to summarize the results, with more information, in a table (as I would ask ChatGPT). It failed:

Google’s blog post announcement says that SGE is an experiment:

“We’re starting with an experiment in Search Labs, called SGE (Search Generative Experience), available on Chrome desktop and the Google App (Android and iOS) in the U.S. (English-only at launch), so we can incorporate feedback and continue to improve the experience over time.”

That means it’s definitely not the final version, and what is ultimately released could be radically different from what we see today.

Google needs some time to see if users are happy with the results. Then, they will evaluate and incorporate feedback as it comes in.

We will continue to cover this topic in-depth to help you stay on top of the latest changes.

One major drawback of Google’s SGE is that it often doesn’t attribute sources properly. I quickly found two queries, “What is JavaScript SEO” and “Batch optimized rendering,” for which the source is not identified. This raises significant copyright attribution issues, along with legal issues (which I will not cover here).

Here is an example using the query “What is JavaScript SEO”:

A number of SEO professionals have pointed out this lack of attribution on social media sites such as Twitter. To say that they are not happy about this is an understatement. Writers and publishers will undoubtedly be negatively impacted by Google’s approach, because without attribution, readers won’t know up front who wrote which article.

Clearly, the attribution problems with Google’s SGE are serious, and Google will need to address them at some point if it hopes to remain legitimate in the eyes of writers and publishers alike.

Since SGE relies on artificial intelligence, its results are not peer-reviewed. They are based on content found on the internet, and while some sources are good, others may be of lower quality. This can lead to misinformation, as well as inconsistent quality of information.

Google even openly warns in the search result: “Generative AI is experimental. Info quality may vary”.

For the query “What is the difference between ‘Crawled – currently not indexed’ and ‘Discovered – currently not indexed’”, I noticed two inaccurate pieces of information:

SGE still struggles to understand some conversational queries. For example, when I asked for a short answer on whether I can buy links, SGE wasn’t helpful, resulting in a poor user experience. It also thought I was asking about URL shorteners rather than buying links. Clearly, SGE needs a lot of work before it will be ready for prime time.

SGE introduces additional waiting times of around 5-7 seconds.

Here is an example: users searching for “best pizza near me now” immediately receive recommendations from “traditional” search. As a result, they may not be willing to wait for SGE-generated results. See the screenshot below:

Bartosz Goralewicz, CEO of Onely, asked SGE to find working coupon codes for a specific product. SGE generated a long list of websites that offer coupons.